By David M. Boothe, CAS

I heard from a colleague I have known for many years, who had recently taken over operations at a Central American television station. While he has a good working knowledge of audio, he asked for information about mixing, levels and metering. He wrote:

In the on-going process of trying to professionalize the operations here at the station, I am tackling the issue of QC on our audio levels. We frequently transmit distorted, saturated audio, but the reasons for it are harder to nail down than I wish they were.

One issue I’m encountering is the difference in meters between tape machines. My Betacam SP recorder players have the typical LED display where the high end on the read out is at about 5 over zero. I’ve always understood that tone should register at zero, and that the program should read a little below zero with only the highest, occasional peaks registering over zero, or briefly into the red.

But I’m finding that the programs we get on digital tapes [DigiBeta or DVcam] read differently. The machines themselves have zero at the very top of the read out, and tone plays at 20 below zero. The program plays with peaks approaching 10 below zero.

Is the standard different now with these digital media?

Although we don’t often work with Beta SP or DigiBeta in radio, the problems he was experiencing regarding digital vs. analog metering and levels are applicable to us in radio and production. And as my colleague’s personnel discovered, misunderstanding these issues can lead to undesirable results.

SOME BACKGROUND

Audio levels and metering became somewhat chaotic when we first started moving to digital production in the 1980s. Digital made obsolete many standards and practices that had become familiar in the analog world. At the same time, digital imposed some new and unfamiliar challenges.

Until the last few years, there have been few standards, in the old sense of the word, for levels and metering in digital production. The situation has improved as some common practices have gotten more… common. Also, a few manufacturers and individuals have made efforts to impose order where none has existed. More on this later.

Let’s review the two basic reasons we use meters at all. The first reason is obvious – to let us know what levels we are running through our equipment, avoiding distortion at one extreme or excessive noise at the other. The second reason may not be quite so obvious. It is very helpful to see a visual representation of the levels our ears are hearing. This helps us keep consistent and comfortable levels over a long period of time. With digital audio, these two goals are often at odds with one another.

ANALOG VS. DIGITAL

The key to understanding these conflicting requirements is the relationship between reference levels and meter ballistics. My colleague discovered this for himself, though he may not have realized it.

We often think of the old style, mechanical VU meters as providing a standard reference level, which they did, if properly set up. If the meter adheres to the IEEE specification (IEEE 152-1991), it is properly called a “standard volume indicator” (SVI) and has standardized ballistics. Many characteristics are standardized, including things like overshoot and reversibility error, as well as the iconic A and B scales. Much older equipment, especially if it was less expensive, had so-called “VU meters” that were not SVIs. A $100 cassette recorder may have had meters that looked like VU meters, and were called VU meters by the manufacturer, but the resemblance was only superficial. Real mechanical VU meters, that conform to IEEE 152-1991 (or before 1991, IEEE 152-1953) are expensive. And big.

In Europe, organizations such as BBC, DIN and EBU developed standards that had their own reference levels, ballistics and scales, which differed from each other. These meters are referred to as “peak reading.” We refer to VU meters as “averaging.”

Ballistics of VU meters, or SVIs, are often referred to as “syllabic.” Originally developed by Bell Telephone Laboratories, NBC, and CBS in the 1930s, their integration time was similar to that of the human ear. In other words, the VU meter provided a visual representation of how the ear perceives volume. A fast transient, such as a snare drum hit, does not register accurately on a VU meter because the transient is too fast and the response time of the meter too slow for the meter to track it. With analog tape, we record percussive material at lower levels, as shown on the VU meter, than material such as voice. You could record a close-miked tambourine so that it peaked at 0 VU, but you only did it once if you didn’t want to sell shoes for a living. Even so, analog systems are usually more forgiving of brief or low-level overloads than digital systems are.

The European-style “peak programme meters” (PPMs) were designed to help eliminate this problem, particularly in broadcasting. Their rise times (specified by delay time and pulse response in IEEE Std 152-1991) are much faster, though still not as fast as some very fast transients, since the PPMs originally were mechanical systems. The choice of rise time was based on models of human hearing which show that most people don’t really notice distortion for very brief periods. However, PPMs do give a much better indication of what levels are actually being printed to tape or sent to air. In order to make the meters readable, the return time is slower than for VU meters. (Return time is the time it takes for the indicator to fall a specific amount after signal is removed.) Ballistics of PPMs do not do a good job of giving an accurate estimation of how consistent your levels will sound to the human ear.
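To make the difference in ballistics concrete, here is a minimal Python sketch of a slow meter and a fast meter looking at the same 5 ms burst. It is only an illustration: the `follow` function is a generic one-pole envelope follower, and the attack and release times are placeholder assumptions, not the values specified in IEEE Std 152-1991 or any PPM standard.

```python
import math

def follow(samples, rate, attack_s, release_s):
    """Generic one-pole envelope follower on the rectified signal.

    Returns the highest reading the 'needle' reaches. The time
    constants are illustrative, not taken from any metering standard.
    """
    attack = math.exp(-1.0 / (attack_s * rate))
    release = math.exp(-1.0 / (release_s * rate))
    level = 0.0
    highest = 0.0
    for s in samples:
        x = abs(s)
        coef = attack if x > level else release
        level = coef * level + (1.0 - coef) * x
        highest = max(highest, level)
    return highest

rate = 48000
# 5 ms burst of full-scale 1 kHz tone followed by silence
burst = [math.sin(2 * math.pi * 1000 * n / rate) if n < 240 else 0.0
         for n in range(rate // 10)]

slow_reading = follow(burst, rate, attack_s=0.1, release_s=0.1)    # slow, averaging style
fast_reading = follow(burst, rate, attack_s=0.002, release_s=1.0)  # fast rise, slow return

print(f"slow meter maximum: {slow_reading:.3f} of full scale")
print(f"fast meter maximum: {fast_reading:.3f} of full scale")
```

The slow follower barely moves during the burst, while the fast one climbs most of the way toward the true peak and then falls back slowly enough to be read.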

DB AND REFERENCE LEVELS

As you probably know, a decibel (dB) is just a ratio. So to say simply that a tone is recorded at 0 dB, -3 dB or +6 dB, with no reference, is meaningless. A dB figure must refer to something to be useful. With a VU meter, the reference mark (0 VU) refers to a specified AC voltage across a specified impedance, typically 4 or 8 decibels above 1 mW (0.775 volt across a 600-ohm load). The rest of the recording or broadcast chain is calibrated to that standard.
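As a quick check on those numbers, the following Python sketch converts a level in dBm to RMS volts. The function name `dbm_to_volts` is made up for this example, and the 600-ohm default simply follows the load mentioned above.

```python
import math

def dbm_to_volts(dbm, impedance_ohms=600.0):
    """Convert dBm (dB relative to 1 mW) to RMS volts across a load."""
    power_watts = 0.001 * 10 ** (dbm / 10.0)
    return math.sqrt(power_watts * impedance_ohms)

for level in (0, 4, 8):
    volts = dbm_to_volts(level)
    print(f"{level:+d} dBm = {volts:.3f} V RMS across 600 ohms")
```

Running it shows 0 dBm landing at about 0.775 volt, with +4 and +8 dBm at roughly 1.23 and 1.95 volts.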

In digital devices, we often refer to decibels referenced to “full scale,” abbreviated “dBFS.” Full scale is when the digital word describing the level is all ones and no zeros. You can’t go higher than that. Therefore, a level relative to full scale will always be at or below 0, such as -20 dBFS. In digital systems 0 dBFS is the only level that has any consistent and easily distinguishable characteristics: if you attempt to go beyond it, catastrophe awaits. So 0 dBFS is really the only reference that makes any sense in a digital system.
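The arithmetic behind a dBFS reading can be sketched in a few lines of Python. This assumes signed 16-bit samples and takes the largest positive code (32767) as full scale; `to_dbfs` is a hypothetical helper, not part of any particular DAW or library.

```python
import math

def to_dbfs(sample_value, bits=16):
    """Level of a single sample magnitude relative to full scale, in dBFS."""
    full_scale = 2 ** (bits - 1) - 1          # 32767 for 16-bit audio (assumed)
    magnitude = min(abs(sample_value), full_scale)
    if magnitude == 0:
        return float("-inf")                  # digital silence
    return 20.0 * math.log10(magnitude / full_scale)

print(to_dbfs(32767))   # full scale
print(to_dbfs(3277))    # about one tenth of full scale
```

A sample at one tenth of full scale reads very close to -20 dBFS, and nothing can read above 0.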

With modern electronic metering, dangerous peaks can now be more accurately displayed, since we don’t have the inertia of a mechanical system. We can easily make sure our signal stays healthy inside the equipment. But what about the other goal – helping us maintain consistent levels at the listener’s ear? The metering that gives us an accurate picture of the health of our signal does not really correlate to what our ear hears. We need a syllabic meter to do that. Simply slowing down the meters won’t help by itself. In order to maintain adequate headroom in the meter, the readings would be so far below 0 dBFS that we could not easily tell how consistent our levels are.

Let’s lower the reference level when we slow the meter ballistics, change the scale so that we can see more detail around the reference level, and less at the extremes (like the old VU meters did). Now we have something that correlates with our ear. That’s exactly what has happened in modern metering systems. In recent years, -20 dBFS has become a common reference point for syllabic meters. You can think of this as having the same usefulness as the old “0 VU.”
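Here is a rough Python sketch of reading peak and average levels against a -20 dBFS reference. It averages over the whole buffer rather than modeling real meter ballistics, and it adds 3.01 dB to the RMS figure so that a sine wave reads the same on both scales; that offset is an assumed convention for this example, and `meter_readings` is a made-up name.

```python
import math

def meter_readings(samples, reference_dbfs=-20.0):
    """Return (peak, average) in dB relative to the reference level.

    Samples are floats where 1.0 represents full scale (0 dBFS).
    """
    peak = max(abs(s) for s in samples)
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    if peak == 0.0:
        return float("-inf"), float("-inf")
    peak_dbfs = 20.0 * math.log10(peak)
    # Assumed convention: offset RMS so a sine reads equal on both scales
    avg_dbfs = 20.0 * math.log10(rms) + 20.0 * math.log10(math.sqrt(2.0))
    return peak_dbfs - reference_dbfs, avg_dbfs - reference_dbfs

rate = 48000
# One second of 1 kHz tone at -20 dBFS
tone = [0.1 * math.sin(2 * math.pi * 1000 * n / rate) for n in range(rate)]
# One 1 ms click per second at about -6 dBFS
click = [0.5 if n < 48 else 0.0 for n in range(rate)]

tone_peak, tone_avg = meter_readings(tone)
click_peak, click_avg = meter_readings(click)
print(f"tone:  peak {tone_peak:+.1f}, average {tone_avg:+.1f}")
print(f"click: peak {click_peak:+.1f}, average {click_avg:+.1f}")
```

The tone sits at the reference mark on both readings. The click shows a peak about 14 dB over the reference while its average is far below it, which is the gap between what the medium sees and what the ear hears.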

SOME OTHER CURRENT PRACTICES

Another currently accepted practice is to limit peaks to about -8 or -6 dBFS, giving us +12 to +14 dB of headroom above our -20 dBFS reference. Now doesn’t this “waste” headroom? After all there are still 6 to 8 dB available that we aren’t using. The answer is – it depends on what you mean by “waste.” In the real world, we cannot assume that every machine that plays back your audio or converts the digital to analog will do so perfectly. If we have 0 dBFS peaks, some machines may distort due to miscalibrated converters or problems with the analog circuitry. Also, we do not know how much headroom a downstream piece of equipment will have at playback, so it’s better to play it safe.
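The headroom arithmetic is simple enough to put in a small Python helper. The name `level_budget` and its defaults are illustrative only; they just restate the figures above.

```python
def level_budget(reference_dbfs=-20.0, peak_ceiling_dbfs=-8.0):
    """Return (headroom above reference, unused margin below 0 dBFS) in dB."""
    headroom = peak_ceiling_dbfs - reference_dbfs
    safety_margin = 0.0 - peak_ceiling_dbfs
    return headroom, safety_margin

print(level_budget(-20.0, -8.0))
print(level_budget(-20.0, -6.0))
```

With a -8 dBFS ceiling there are 12 dB of headroom and 8 dB held in reserve; moving the ceiling to -6 dBFS trades 2 dB of that reserve for headroom.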

This accepted practice — reference at -20 dBFS and maximum peaks at -8 dBFS — is what major television networks seem to want nowadays and so has become pretty much a de facto standard. I use this standard when I mix for radio, as well.

But why can’t we have it all? We need to meter the peaks for the benefit of the recording medium. And we need a syllabic metering system for the sake of the listener, including the guy that mixes (that would be you or me).

Well, we can have it all, with several currently available metering systems. Having at least one set of reference-quality meters is a requirement for professional work. It is not optional. Sometimes you get lucky and such meters will come with your console or DAW, but not always. How you calibrate these meters is integral to how you set up the level structure of your audio system. Good, professional meters will have a clear reference position that works (with a sine wave) for both the peak and average metering. They will also tell you how to calibrate the meters relative to your house levels. And they will display both peak and average levels simultaneously, with appropriate ballistics for each.

WHAT I DO – YOUR MILEAGE MAY VARY

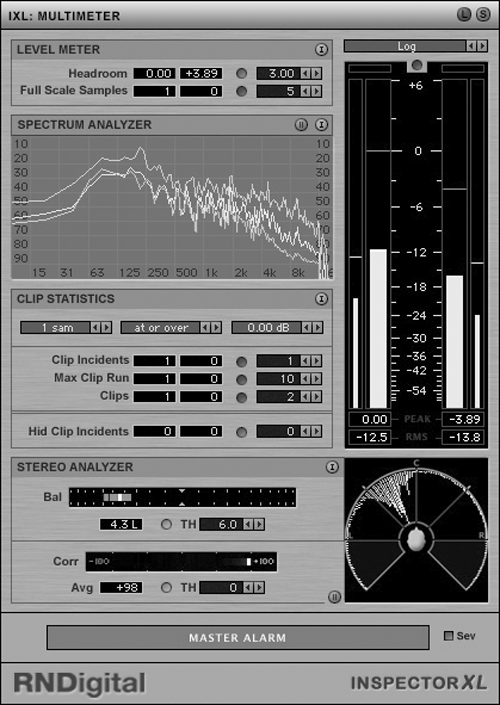

Lately, I have been using two metering systems when I mix: a pair of analog Dorrough 40-A meters and the Inspector XL package from Roger Nichols Digital, which incorporates Bob Katz’ K-system. Inspector XL is an RTAS and VST plug-in. The Dorrough is a rack mount hardware unit that uses LEDs for display. Either of these can give good results by itself, as can any of several others on the market from companies such as Coleman, Digidesign, Logitek, Sonalksis, and Waves.

When using the Inspector XL plug-in, I use the -20 K-scale. This has a reference at -20 dBFS marked “0” with positive numbers above it and negative numbers below it. With this scale I can easily see where my levels are at any time, both peak and average, and what the highest levels were (peak & average) within the last n seconds (n is adjustable).

Currently, I am “mixing in the box,” so I calibrate the Dorrough 40-A meters so that their reference mark is equivalent to -20 dBFS (as indicated by Inspector XL) coming out of the DAW’s analog hardware. If I were mixing to external equipment, I would also make sure this level is +4 dBm.

By calibrating in this way and using a bus limiter at around -6 dBFS or -8 dBFS, I can focus mainly on balances and maintaining consistent average levels, with only an occasional check of the peaks. That’s easy enough with either system I’ve mentioned. The advantage is that I can mix instinctively, by ear, getting consistent levels over the long haul and still stay out of digital danger.

NORMALIZATION

Normalization calculates the difference between the highest level in the file and a predetermined level (often 0 dBFS), then applies that amount of gain to the entire file. This sounds like a good idea. However, there are a few less-than-obvious issues to consider. Let’s consider some reasons why we normalize audio files.

One rationale goes like this. At the lower end of the dynamic range, there are fewer bits to describe the waveform, so the dynamic range is smaller. In other words, the audio is not taking advantage of all the bits. But once the file is recorded, the amount of dynamic range used is already fixed. If the audio was created at a lower level (allowing for less dynamic range), normalizing does not increase the dynamic range of the recorded signal. It simply moves everything closer to 0 dBFS. However, normalization can increase the dynamic range available to later operations.

Another rationale for normalization is to make all sound files the same level. Normalization uses only the peak audio levels to do its calculations. So from this, and the previous discussions, we realize that normalization, by itself, cannot make two sound files sound equally loud.
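A small Python sketch shows why. It normalizes two signals to the same peak and then compares their RMS levels. The function names are made up for this example, the RMS is taken over the whole buffer, and the two test signals are synthetic stand-ins for real program material.

```python
import math

def normalize_peak(samples, target_dbfs=0.0):
    """Scale samples so the highest peak lands on target_dbfs (1.0 = full scale)."""
    peak = max(abs(s) for s in samples)
    if peak == 0.0:
        return list(samples)
    gain = 10 ** (target_dbfs / 20.0) / peak
    return [s * gain for s in samples]

def rms_dbfs(samples):
    """Whole-buffer RMS level in dBFS."""
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return 20.0 * math.log10(rms)

rate = 48000
sustained = [0.2 * math.sin(2 * math.pi * 1000 * n / rate) for n in range(rate)]
percussive = [0.5 if n < 48 else 0.0 for n in range(rate)]  # one 1 ms click

norm_sustained = normalize_peak(sustained)
norm_percussive = normalize_peak(percussive)

print(f"sustained:  peak {max(abs(s) for s in norm_sustained):.3f}, "
      f"RMS {rms_dbfs(norm_sustained):.1f} dBFS")
print(f"percussive: peak {max(abs(s) for s in norm_percussive):.3f}, "
      f"RMS {rms_dbfs(norm_percussive):.1f} dBFS")
```

Both files now peak at full scale, yet their average levels are more than 20 dB apart, so they will not sound equally loud.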

Normalization simply raises or lowers the level of an entire sound file by a predetermined amount. It follows that any noise present in the audio is raised by the same amount as the desired audio. Any inaccuracies due to recording in only the lowest bits will similarly be raised by the same amount as the desired signal.

Another precaution is to normalize to a level slightly lower than 0 dBFS. Let’s say you normalized cleanly to 0 dBFS and afterward decided to boost 2 kHz by 2 dB using a peak EQ. Guess what happens when you have a 0 dBFS peak at 2 kHz?
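The answer can be sketched in Python. For a pure 2 kHz tone, a 2 dB peak EQ boost centered at 2 kHz behaves like 2 dB of plain gain, so this example uses simple gain as a stand-in for the EQ; that substitution, the hard clip at full scale, and the function name are all assumptions made for illustration.

```python
import math

def boost_and_clip(samples, gain_db):
    """Apply gain, then hard-clip at full scale (1.0). Returns (output, clipped count)."""
    gain = 10 ** (gain_db / 20.0)
    boosted = [s * gain for s in samples]
    clipped_count = sum(1 for s in boosted if abs(s) > 1.0)
    output = [max(-1.0, min(1.0, s)) for s in boosted]
    return output, clipped_count

rate = 48000
tone_2k = [math.sin(2 * math.pi * 2000 * n / rate) for n in range(rate // 10)]

# Normalized to 0 dBFS, then boosted 2 dB
_, clipped_at_zero = boost_and_clip(tone_2k, 2.0)

# Normalized to -3 dBFS instead, then boosted 2 dB
quieter = [s * 10 ** (-3.0 / 20.0) for s in tone_2k]
_, clipped_at_minus3 = boost_and_clip(quieter, 2.0)

print(f"clipped samples after 0 dBFS normalize:  {clipped_at_zero}")
print(f"clipped samples after -3 dBFS normalize: {clipped_at_minus3}")
```

The file normalized to 0 dBFS clips as soon as the boost is applied, while the one normalized a few dB lower has room to absorb it.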

Digital audio has increased our production flexibility. I don’t think any of us would want to go back. However, it also increased confusion and inconsistencies with meters and levels, by making widely accepted analog practices obsolete. With new tools that have come along in recent years and thoughtful application of analog methods to our digital reality, we can again keep levels consistent and clean.

♦